Now, if we run `node scrapper.js`, you should see the scraped featured articles logged to your console.

Cheerio is an excellent framework for manipulating and scraping markup on the server side. It is lightweight and uses a familiar, jQuery-like syntax.

To complete this tutorial, you will need:

- Basic familiarity with HTML, CSS, and the DOM.
- Familiarity with the command line and a text editor.

Cheerio can be used in any ES6+, TypeScript, or Node.js project, but this article focuses on Node.js.

To get started, run `npm init -y`, which generates a new `package.json` file with default contents.

Fetch failures are handled with a `.catch` callback that logs the error:

`.catch((err) => console.log("Fetch error " + err))`
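The `.catch((err) => console.log("Fetch error " + err))` handler in this section appears to be the tail of a promise chain that downloads a page. A minimal sketch of such a chain follows, assuming Node.js 18 or later, where `fetch` is built in. `fetchHtml` is a hypothetical name, and the `fetchImpl` parameter exists only so the function can be exercised without network access.

```javascript
// Sketch only: assumes Node.js 18+ so that the global fetch() is available.
// `fetchHtml` is a hypothetical helper name, not part of any library.
function fetchHtml(url, fetchImpl = globalThis.fetch) {
  return fetchImpl(url)
    .then((res) => {
      // fetch() only rejects on network failures, so check the HTTP status explicitly.
      if (!res.ok) {
        throw new Error(`HTTP ${res.status} for ${url}`);
      }
      return res.text();
    })
    .catch((err) => {
      // Mirrors the .catch handler in the text; resolve to null so callers can skip this page.
      console.log("Fetch error " + err);
      return null;
    });
}
```

A caller would then do something like `fetchHtml("https://example.com").then((html) => { if (html) { /* parse with Cheerio */ } })`. Resolving to `null` instead of rethrowing is a design choice for batch scraping, where one failed page should not stop the rest.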

Node.js has no built-in way to parse and manipulate markup, because it executes code outside of the browser, where the DOM APIs live. In this article, we will explore Cheerio, an open source JavaScript library designed specifically for this purpose.

Cheerio provides a flexible and lean implementation of jQuery, but it is designed for the server. Parsing, manipulating, and rendering markup with Cheerio is fast because it works with a simple, consistent DOM model and, unlike a browser, does not produce a visual rendering, apply CSS, load external resources, or execute JavaScript. Apart from parsing HTML, Cheerio also works well with XML documents.

This tutorial assumes no prior knowledge of Cheerio and covers the following areas:

- Installing Cheerio in a Node.js project.
- Understanding Cheerio (loading, selectors, DOM manipulation, and rendering).
- Building a sample application (FeatRocket) that scrapes LogRocket featured articles and logs them to the console.

This code should do the same thing as the code in the Puppeteer section and should behave similarly. The advantage of Playwright is that it is more versatile: it can drive Chromium, Firefox, and WebKit rather than just one type of browser. Try running this code with the other browsers and see how that affects the behavior of your script. As with the other libraries, I also wrote a separate tutorial that goes deeper into Playwright if you want a longer walkthrough.

Now that you can programmatically grab things from web pages, you have access to a huge source of data for whatever your projects need. Keep in mind that changes to a web page's HTML can break your code, so keep everything up to date if you're building applications that rely on scraping. I'm looking forward to seeing what you build. Feel free to reach out and share your experiences or ask any questions.

Elijah Asaolu: I am a programmer, I have a life.

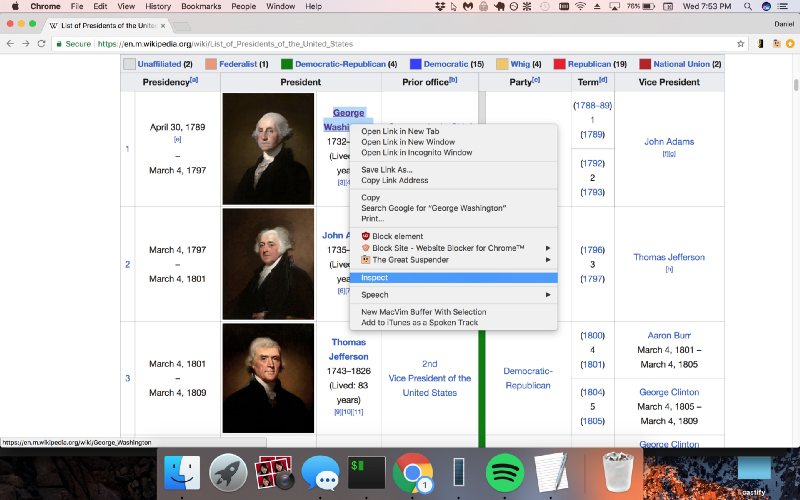

Let's try finding all of the links to unique MIDI files on this web page from the Video Game Music Archive, which has a bunch of Nintendo music, as the example problem we want to solve with each of these libraries.

Before moving on to specific tools, there are some common themes that will be useful no matter which method you decide to use.

Before writing code to parse the content you want, you typically need to look at the HTML rendered by the browser. Every web page is different, and getting the right data out of one sometimes requires a bit of creativity, pattern recognition, and experimentation. Most modern browsers include helpful developer tools: if you right-click the element you're interested in, you can inspect the HTML behind that element to get more insight.

You will also frequently need to filter for specific content. This is often done with CSS selectors, which you will see throughout the code examples in this tutorial, to gather HTML elements that meet specific criteria. Regular expressions are also very useful in many web scraping situations. On top of that, if you need a little more granularity, you can write functions that filter elements by their content, such as one that determines whether a hyperlink tag refers to a MIDI file.
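As a hedged sketch of such a filter, the snippet below combines a function with a regular expression. `isMidiLink` and `uniqueMidiLinks` are hypothetical names: the first takes a link's `href` value (for example, what `$(el).attr("href")` returns in Cheerio, which is `undefined` when the attribute is missing) and checks for a `.mid` or `.midi` extension; the second keeps one copy of each matching link.

```javascript
// Sketch only: `isMidiLink` and `uniqueMidiLinks` are hypothetical helpers, not part of any library.

// Matches hrefs whose path ends in .mid or .midi (case-insensitive),
// optionally followed by a query string or fragment.
const MIDI_HREF = /\.midi?(?:[?#].*)?$/i;

// Returns true if an href value (e.g. from an <a> tag) points at a MIDI file.
function isMidiLink(href) {
  // Links without an href attribute come through as undefined, so reject non-strings.
  if (typeof href !== "string") {
    return false;
  }
  return MIDI_HREF.test(href);
}

// Keeps each MIDI href once, preserving the order in which they first appear.
function uniqueMidiLinks(hrefs) {
  return [...new Set(hrefs.filter(isMidiLink))];
}

const hrefs = ["songs/zelda.mid", "songs/zelda.mid", "about.html", undefined, "tracks/mario.MIDI?dl=1"];
console.log(uniqueMidiLinks(hrefs)); // [ 'songs/zelda.mid', 'tracks/mario.MIDI?dl=1' ]
```

Anchoring the pattern with `$` avoids false positives such as `file.midx` or paths that merely contain `.mid` somewhere in the middle.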
